3D Point Clouds

Enables a robot to steer safely through unknown and potentially dangerous 3D environments

In this article:

- 3D Point Cloud Stitching Enhances Robotic Navigation: Waygate Technologies developed a 3D laser point cloud stitching solution to enable autonomous robots to safely navigate complex and hazardous industrial environments

- Real-Time Environmental Mapping: The system continuously captures and merges 3D laser scans into a cohesive point cloud, creating a detailed digital twin of the robot’s surroundings for precise path planning

- Improved Safety in Confined Spaces: By providing accurate spatial awareness, the technology reduces the risk of collisions and enhances operational safety in confined or unknown environments

- Supports Autonomous and Remote Operations: The stitched point cloud data enables both autonomous navigation and remote operator control, making it ideal for inspections in inaccessible or dangerous areas

- Scalable for Industrial Applications: This technology is adaptable across various industries, including oil and gas, power generation, and infrastructure, where robotic inspection and mapping are critical

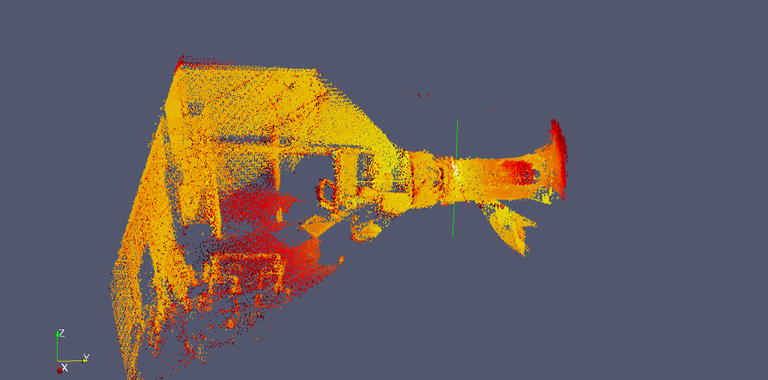

The robot carries a rotating 3D laser range finder developed specifically for this application. The laser data, consisting of 3D point clouds, is transmitted to a control PC for processing. There, each new scan is matched against the previously collected points so that the result is a single coherent point cloud that correctly represents the environment. A viewer application on the PC lets the operator inspect this representation conveniently.
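The article does not say which matching algorithm the control PC uses. As a minimal sketch under that caveat, the Iterative Closest Point (ICP) method below aligns a new scan to the existing map. It pairs each scan point with its nearest map point, solves for the best rigid rotation and translation with an SVD (the Kabsch method), and repeats. The function names and the brute-force neighbour search are illustrative assumptions, not the product's implementation.

```python
import numpy as np


def best_fit_transform(src: np.ndarray, dst: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Least-squares rigid transform (R, t) mapping src onto dst (Kabsch/SVD).

    src and dst are (N, 3) arrays of corresponding points.
    """
    src_c = src.mean(axis=0)
    dst_c = dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)   # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    R = Vt.T @ U.T
    if np.linalg.det(R) < 0:              # reflection: flip the last singular vector
        Vt[-1, :] *= -1
        R = Vt.T @ U.T
    t = dst_c - R @ src_c
    return R, t


def icp(new_scan: np.ndarray, map_points: np.ndarray,
        iterations: int = 30) -> tuple[np.ndarray, np.ndarray]:
    """Align new_scan to map_points with point-to-point ICP.

    Returns the accumulated (R, t) so that new_scan @ R.T + t lies on the map.
    Nearest neighbours are found by brute force to keep the sketch short.
    """
    R_total = np.eye(3)
    t_total = np.zeros(3)
    current = new_scan.copy()
    for _ in range(iterations):
        # squared distance from every current point to every map point
        d2 = ((current[:, None, :] - map_points[None, :, :]) ** 2).sum(axis=2)
        matches = map_points[d2.argmin(axis=1)]
        R, t = best_fit_transform(current, matches)
        current = current @ R.T + t
        # compose: x -> R (R_total x + t_total) + t
        R_total = R @ R_total
        t_total = R @ t_total + t
    return R_total, t_total
```

For scans of realistic size, the O(N·M) distance matrix would typically be replaced by a spatial index such as a k-d tree, and outlier rejection would be added so that points seen in only one scan do not distort the fit.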

Point Cloud

The 3D laser range finder creates a point cloud of the environment. As the robot moves, it captures point clouds from several different positions. Combining them yields a denser point cloud that contains more information.
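Combining scans taken from different positions requires transforming each scan from the sensor frame into a common world frame using the robot pose at capture time. The sketch below assumes a pose given as position plus yaw (flat ground) and uses a hypothetical voxel size to merge points that fall into the same small cube, so overlapping views do not duplicate points while newly observed areas still add coverage.

```python
import math
from typing import Iterable

Point = tuple[float, float, float]
Pose = tuple[float, float, float, float]  # x, y, z, yaw (assumed representation)


def to_world(scan: Iterable[Point], x: float, y: float, z: float, yaw: float) -> list[Point]:
    """Transform sensor-frame points into the world frame for one robot pose.

    Only yaw is applied (flat-ground assumption); a full 6-DoF pose would
    also apply roll and pitch.
    """
    c, s = math.cos(yaw), math.sin(yaw)
    return [(c * px - s * py + x, s * px + c * py + y, pz + z) for px, py, pz in scan]


def merge_scans(scans_with_poses: Iterable[tuple[Iterable[Point], Pose]],
                voxel: float = 0.05) -> list[Point]:
    """Stitch scans into one cloud, keeping one point per voxel (voxel size hypothetical)."""
    merged: dict[tuple[int, ...], Point] = {}
    for scan, pose in scans_with_poses:
        for p in to_world(scan, *pose):
            key = tuple(math.floor(v / voxel) for v in p)
            merged.setdefault(key, p)  # first point seen in each voxel is kept
    return list(merged.values())
```

For example, a wall point seen at 2 m from the start position and at 1 m after the robot has driven 1 m forward maps to the same world coordinate and is stored only once.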

Sensor Data

Robot sensor data, such as IMU (inertial measurement unit) readings and encoder data (wheel rotations), is added to the model as an additional source of information.
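One common way to use encoder and IMU data together is dead reckoning: wheel encoder ticks give the distance travelled and the IMU gives the heading. The sketch below assumes a two-wheel differential drive and hypothetical values for wheel radius and encoder resolution; the actual robot's drive kinematics are not described in the article.

```python
import math
from dataclasses import dataclass

# Hypothetical robot parameters, for illustration only.
TICKS_PER_REV = 1024
WHEEL_RADIUS_M = 0.05


@dataclass
class Pose2D:
    x: float = 0.0
    y: float = 0.0
    yaw: float = 0.0  # radians


def ticks_to_metres(ticks: int) -> float:
    """Convert encoder ticks to distance rolled by one wheel."""
    return 2.0 * math.pi * WHEEL_RADIUS_M * ticks / TICKS_PER_REV


def dead_reckon(pose: Pose2D, left_ticks: int, right_ticks: int, imu_yaw: float) -> Pose2D:
    """Advance the pose using the mean wheel travel and the IMU heading.

    Taking the heading from the IMU instead of from the wheel difference
    keeps wheel slip from corrupting the yaw estimate.
    """
    distance = 0.5 * (ticks_to_metres(left_ticks) + ticks_to_metres(right_ticks))
    # integrate along the mid-point heading between the old and the new yaw
    dyaw = math.atan2(math.sin(imu_yaw - pose.yaw), math.cos(imu_yaw - pose.yaw))
    heading = pose.yaw + 0.5 * dyaw
    return Pose2D(pose.x + distance * math.cos(heading),
                  pose.y + distance * math.sin(heading),
                  imu_yaw)
```

Dead reckoning drifts over time, which is why it is combined with the position obtained from point cloud matching rather than used alone.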

Data Processing

Advanced algorithms combine the stitched point cloud with the onboard sensor data to calculate the robot's precise position in 3D space.
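The article does not name the navigation filter. A Kalman filter is a common choice for this kind of fusion, so the following one-axis sketch shows the idea, assuming Gaussian noise and hypothetical variances. Odometry predicts the new position and increases the uncertainty, and a position fix from point cloud matching pulls the estimate back in proportion to how much each source is trusted.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    value: float     # position along one axis (m)
    variance: float  # uncertainty of that position (m^2)


def predict(est: Estimate, odom_delta: float, odom_var: float) -> Estimate:
    """Propagate with odometry: move by odom_delta, uncertainty grows."""
    return Estimate(est.value + odom_delta, est.variance + odom_var)


def correct(est: Estimate, measured: float, meas_var: float) -> Estimate:
    """Blend in a scan-matching position fix, weighted by the Kalman gain."""
    gain = est.variance / (est.variance + meas_var)
    return Estimate(est.value + gain * (measured - est.value),
                    (1.0 - gain) * est.variance)
```

A real filter would track x, y, z and orientation jointly with covariance matrices, but the predict/correct cycle is the same. When fewer modules are fitted, fewer corrections arrive and the variance stays larger, which matches the rougher localization described below.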

The navigation system has a modular architecture, made possible mainly by the use of Ethernet for communication. Depending on which modules are mounted and in use, the navigation filter on the PC provides either a more accurate or a rougher localization estimate. The software is built from individually coded modules that share information over inter-process channels. The main modules are:

· The motor control software (which includes the navigation filter)

· The laser point cloud processor

· The 3D visualization module

· The video recording and playback module
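The article states only that the modules share information over inter-process channels. As an illustration, the sketch below models those channels as a topic-based publish/subscribe bus with one queue per subscriber. It runs in a single process for simplicity; in a real deployment each queue would be backed by a socket or a similar IPC mechanism. The class and topic names are hypothetical.

```python
import queue
from collections import defaultdict


class MessageBus:
    """Minimal topic-based publish/subscribe bus (in-process stand-in for IPC).

    Each subscriber gets its own unbounded queue, so publishing never blocks
    and a slow consumer (e.g. a viewer) does not hold up a fast one.
    """

    def __init__(self) -> None:
        self._subscribers: defaultdict[str, list[queue.Queue]] = defaultdict(list)

    def subscribe(self, topic: str) -> queue.Queue:
        """Register a new subscriber and return the queue it should read from."""
        q: queue.Queue = queue.Queue()
        self._subscribers[topic].append(q)
        return q

    def publish(self, topic: str, message: object) -> int:
        """Deliver message to every subscriber of topic; return the receiver count."""
        subs = self._subscribers.get(topic, [])
        for q in subs:
            q.put(message)
        return len(subs)
```

For example, the laser point cloud processor might publish each stitched scan on a `pointcloud` topic to which both the navigation filter and the 3D visualization module subscribe; adding or removing a module then only changes who subscribes.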

The navigation modules communicate with the control PC over Ethernet, and their data is evaluated by software running directly on the PC. The robot control software also runs on the PC and sends motion commands to the on-board motor controllers over Ethernet.
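The article does not specify the protocol or message format used for motion commands. Assuming UDP over Ethernet and a hypothetical fixed-size binary layout (sequence number, linear velocity, angular velocity), encoding and sending a command could look like this:

```python
import socket
import struct

# Hypothetical wire format: little-endian uint32 sequence number,
# float32 linear velocity (m/s), float32 angular velocity (rad/s).
CMD_FORMAT = "<Iff"


def encode_motion_command(seq: int, linear: float, angular: float) -> bytes:
    """Pack one motion command into a 12-byte datagram payload."""
    return struct.pack(CMD_FORMAT, seq, linear, angular)


def decode_motion_command(payload: bytes) -> tuple[int, float, float]:
    """Unpack a payload produced by encode_motion_command."""
    return struct.unpack(CMD_FORMAT, payload)


def send_motion_command(sock: socket.socket, addr: tuple[str, int],
                        seq: int, linear: float, angular: float) -> None:
    """Send one command datagram to a motor controller at addr."""
    sock.sendto(encode_motion_command(seq, linear, angular), addr)
```

UDP suits periodic setpoints, because a lost datagram is simply superseded by the next one; the sequence number lets the receiver discard packets that arrive out of order.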
